With so much visual content shaping user behavior, apps like Google Lens are changing how we find and interact with information. Google Lens is an AI- and computer vision-powered app that lets users identify objects, scan text, translate languages, and explore their surroundings with their smartphone's camera. According to MarketsandMarkets, the visual search market is projected to reach $40 billion by 2025.

With the growing demand for intuitive, image-based experiences, businesses are now exploring similar app solutions to stand out from their competitors. In this blog, we discuss the essential features, development costs, and technologies required to build a functional and effective app like Google Lens.

What is Google Lens?

Google Lens is Google's image recognition technology that lets you use your camera to "see" and engage with the world around you. It can identify objects, translate text, solve math problems, and find visually similar items.

It was officially launched on October 4, 2017. As of January 2021, the standalone Google Lens app had been downloaded over 500 million times on the Play Store. Lens is also integrated into Google Photos and Google Assistant and is available as a feature in the Google Camera app.

Why Develop a Social Media App Like Google Lens?

Developing a social networking app in the UAE with Google Lens-like capabilities is one way to combine group sharing with visual artificial intelligence. Here are five advantages, supported by market evidence, that explain why you might create an app like Google Lens.

Taps Into The Growing Visual-Search Market

Visual search is a real trend, particularly among younger users. MarketsandMarkets projects that it will be a $40 billion industry by 2025. When AI helps people identify an object, a landmark, or a product and then share what they have discovered, your app becomes both a useful tool and a social experience.

Increases User Engagement Through Smart Content Discovery

Users can visually explore their surroundings and share their findings with others, adding another dimension to the discovery experience and making it both interactive and potentially addictive. A HubSpot study also found that visual content is 40 times more likely to be shared than other types of content.

From there, your app can let users turn photos or scans taken in the app into searchable, shareable content in a Discover tab, driving organic engagement and new user acquisition.

Increases Monetization Possibilities Through Visual Commerce

Visual shopping innovations are changing e-commerce. Pinterest reports that 80% of its users start with visual search before making a purchase. Partnering with brands or integrating affiliate links lets users identify and buy products directly from their images, giving advertisers new product exposure and giving you additional revenue channels.

Encourages User-Generated Content and Virality

AI-assisted tagging, content suggestions, and similar features help users create smarter, richer posts. According to Statista, user-generated content (UGC) achieves 6.9 times more engagement than brand-created content. Lens-style scanning, combined with AI-based insights and social sharing options, gives creators an incentive to produce more content.

Aligns with AI-Powered Personalization Trends

Artificial intelligence makes hyper-personalized feeds, suggestions, and friend recommendations possible. A Salesforce study (The Future of Commerce: Why Customers Stay) found that 62% of consumers expect brands to understand their needs by providing personalized experiences.

A Google Lens-type app can infer user preferences from what they scan, making content discovery and connections much more relevant to each user.

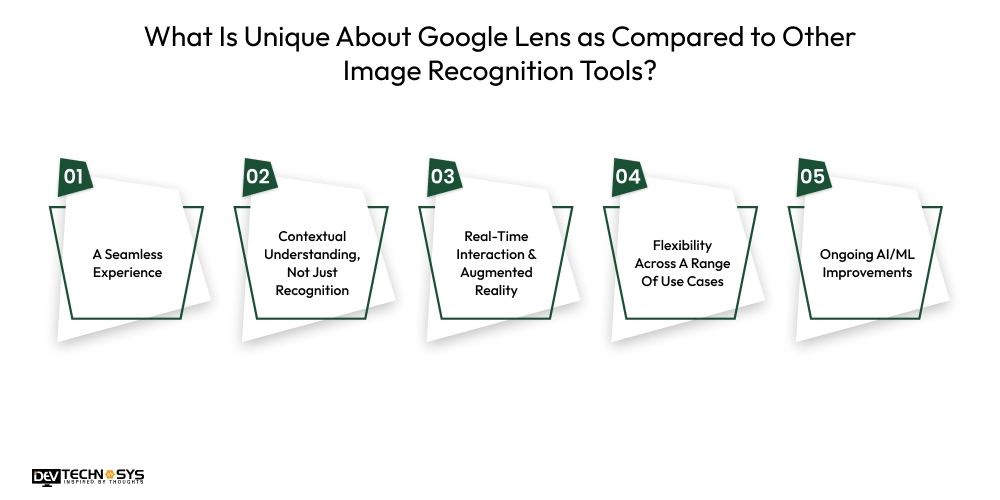

What Is Unique About Google Lens as Compared to Other Image Recognition Tools?

Google Lens is not just another image recognition tool; it is a powerful bridge between the physical and digital worlds that gives instant access to information. So what makes Lens unique enough to capture the attention of both entrepreneurs and users?

A Seamless Experience:

Lens is less a stand-alone tool than a feature woven into Google Photos, Google Assistant, and Chrome. Because it is embedded, you can reach it with little effort from many different touchpoints.

It also draws on the vast knowledge graph that Google has built outside the app, so it returns rich, relevant results. From an entrepreneur's perspective, this kind of integration offers a massive potential user base and built-in data insights.

Contextual Understanding, Not Just Recognition:

Lens is more than an object recognizer; it understands the context of what you see. Point your device at a restaurant sign, and it not only reads the text but can also pull up reviews, menus, and directions. This contextual intelligence provides real utility that delights users.

Real-Time Interaction & Augmented Reality:

Live translation of text on a sign, instant answers when you scan homework problems, and instant information on landmarks in your camera view feel revolutionary. Combining real-time AR interaction with static image analysis gives users an interactive gateway to location-based information, creating an engaging, "sci-fi" experience.

Flexibility Across A Range Of Use Cases:

Some competitors focus their apps on a single niche (e.g., fashion or plants), but Lens is a true multi-tool. From shopping to education to travel to accessibility, it offers broad utility across a wide range of daily experiences. This flexibility keeps users coming back while opening many monetization options for developers.

Ongoing AI/ML Improvements:

Lens is improving all the time. Backed by Google's enormous R&D investment in AI and machine learning, its computer vision models keep getting more accurate, and better accuracy delivers more useful results and experiences.

Google's ongoing investment in AI means Lens is well positioned as a future-proof platform with plenty of room for new features.

6 Stages to Create An App Like Google Lens

Creating an app like Google Lens isn't easy, mainly because of the amount of AI and machine learning that image recognition requires. Working with an experienced hybrid app development company makes the process much more manageable. Below are the six stages of building such an app:

Idea Development and Market Research:

First, define the app's core function and its target users. Are you building a product recognition app, a text translator, or something else? Your Android and iOS app development company will then conduct market research to validate the idea, study competitors, and identify unique selling points, so you can be sure the app meets a real market need.

Features Definition and Technical Specification:

Once the idea is validated, define the features for the Minimum Viable Product (MVP) and those that can be added later. This includes the image recognition details: which AI model to use, how to integrate an API (e.g., Google Cloud Vision AI or Amazon Rekognition), and how the app interacts with the device hardware. The resulting technical specification document serves as the "blueprint" for Google Lens app development in the UAE.

UI/UX Design:

In this stage, designers develop a simple, visually appealing user experience. Dedicated designers create wireframes and prototypes for the app's workflows and screens.

For an app like Google Lens, a smooth camera experience, clear presentation of results, and easy navigation are critical. The development company will focus on a design that minimizes friction between actions.

Backend Development & AI/ML:

Backend development and AI/ML are the most critical activities, and the largest investment, in an app like Google Lens. The development team plans and builds the backend infrastructure and implements all the functionality for image processing, data storage, and the AI/ML models, which need a robust inference engine for object recognition, OCR, and every other feature of the app.

Backend work on a project like this typically involves significant use of machine learning frameworks and cloud services.
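A backend that serves several recognition features often routes each request to a task-specific model. The Python sketch below illustrates that idea under stated assumptions: `InferenceRouter`, `Detection`, the task name `"objects"`, and `stub_object_detector` are all hypothetical, and the stub stands in for a real model or a call to an API such as Google Cloud Vision.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Detection:
    label: str
    confidence: float


# A recognizer takes raw image bytes and returns candidate detections.
Recognizer = Callable[[bytes], List[Detection]]


@dataclass
class InferenceRouter:
    """Routes each scan to the recognizer registered for its task (hypothetical design)."""
    min_confidence: float = 0.5
    recognizers: Dict[str, Recognizer] = field(default_factory=dict)

    def register(self, task: str, recognizer: Recognizer) -> None:
        self.recognizers[task] = recognizer

    def run(self, task: str, image: bytes) -> List[Detection]:
        if task not in self.recognizers:
            raise KeyError(f"no recognizer registered for task {task!r}")
        detections = self.recognizers[task](image)
        # Drop low-confidence hits and return the most confident first.
        kept = [d for d in detections if d.confidence >= self.min_confidence]
        return sorted(kept, key=lambda d: d.confidence, reverse=True)


def stub_object_detector(image: bytes) -> List[Detection]:
    # Placeholder for a real on-device model or a cloud vision API call.
    return [Detection("shoe", 0.12), Detection("backpack", 0.91), Detection("bag", 0.62)]


router = InferenceRouter()
router.register("objects", stub_object_detector)
print([d.label for d in router.run("objects", b"<jpeg bytes>")])  # ['backpack', 'bag']
```

Keeping every recognizer behind the same callable signature makes it easier to start with a third-party API and swap in a custom model later without touching the rest of the backend.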

Front-end Development & QA:

The front-end development team builds the mobile application for iOS and/or Android based on the approved UI/UX design. The development company also conducts extensive Quality Assurance (QA) testing.

QA covers functional, usability, performance, and security testing. For a Lens-like app, the most extensive testing focuses on recognition accuracy and recognition speed across scenarios such as image-to-text, image-to-image recognition, and user-to-image recognition.
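Recognition accuracy and speed are usually tracked as simple metrics over a labelled test set. A minimal sketch, assuming top-1 accuracy for accuracy and a nearest-rank percentile (such as p95) for latency; the test results and latencies below are hypothetical.

```python
import math
from typing import Sequence, Tuple


def top1_accuracy(results: Sequence[Tuple[str, str]]) -> float:
    """Share of (predicted, expected) label pairs that match exactly."""
    if not results:
        raise ValueError("need at least one test case")
    return sum(pred == expected for pred, expected in results) / len(results)


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile, e.g. pct=95 for p95 latency."""
    if not values or not 0 < pct <= 100:
        raise ValueError("need values and 0 < pct <= 100")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical results from a small labelled test set.
results = [("cat", "cat"), ("dog", "cat"), ("tree", "tree"), ("car", "car")]
latencies_ms = [120, 80, 95, 300, 110]
print(top1_accuracy(results))        # 0.75
print(percentile(latencies_ms, 95))  # 300
```

Reporting a high percentile rather than the average latency highlights the slow scans that users actually notice.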

Deployment, Launch & Ongoing Support:

In the final stage, after extensive beta testing and bug fixes, the development company deploys the app to the Google Play Store and Apple App Store while ensuring compliance with each store's guidelines.

After launch, the Google Lens app development company continues to provide ongoing maintenance, regular bug fixes, performance monitoring, and updates based on customer feedback or technology enhancements.

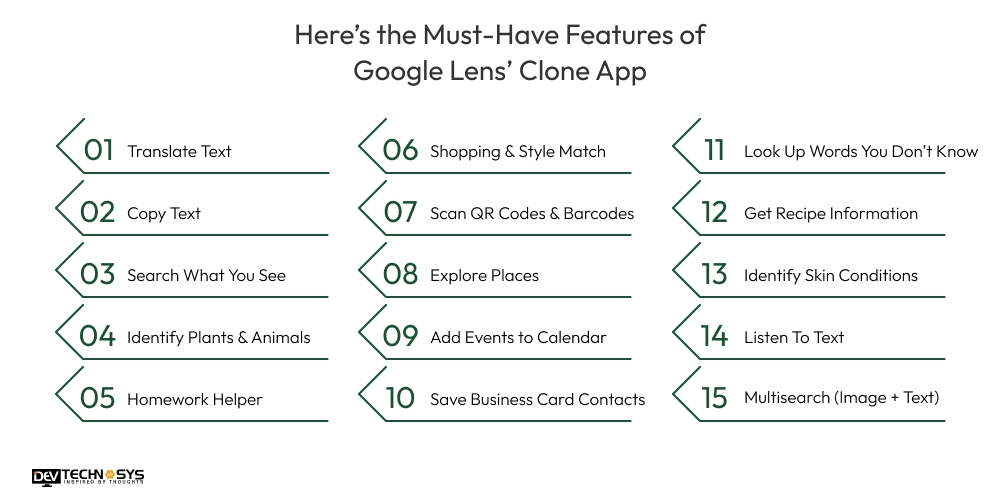

Must-Have Features of a Google Lens Clone App

Now that you know the Google Lens app development process, here are the features that make Lens so useful and that your clone app should include.

Translate Text:

The AI-powered camera app translates foreign-language text in real time: just point your camera at a sign, menu, or document, and the translation is overlaid on top of the original text.

Copy Text:

You can select printed or handwritten text from the physical world, through the camera or in an image, and copy it directly to your phone's clipboard for easier pasting or sharing.

Search What You See:

Point your camera at any object, such as a building, a product, or a piece of art, and the AI image processing app identifies it and returns relevant search results.

Identify Plants & Animals:

Ever wonder what that flower or dog breed is? Google Lens identifies many kinds of plants and animals and shares details about the species and its characteristics.

Homework Helper:

If you are stuck on a math problem, history question, or science concept, Lens can scan it and give you step-by-step information, videos, and related web results.

Shopping & Style Match:

If you see an outfit, furniture, or home decor item that you like, Lens can help you find similar items online to compare prices and find out where to buy them.
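Finding similar items is commonly framed as nearest-neighbour search over image embeddings. The sketch below assumes some model (not shown) has already turned each catalog photo and the user's photo into a vector; the item names and toy vectors are hypothetical, and this illustrates the general technique rather than how Google Lens itself works.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


def most_similar(query: Sequence[float],
                 catalog: Dict[str, Sequence[float]],
                 k: int = 3) -> List[Tuple[str, float]]:
    """Brute-force k-nearest neighbours by cosine similarity."""
    scored = [(item, cosine_similarity(query, vec)) for item, vec in catalog.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy 3-dimensional embeddings; real models typically use far more dimensions.
catalog = {
    "red sofa": [0.9, 0.1, 0.0],
    "grey sofa": [0.7, 0.7, 0.0],
    "oak table": [0.0, 1.0, 0.0],
}
photo = [1.0, 0.0, 0.0]  # embedding of the user's photo
print([name for name, _ in most_similar(photo, catalog, k=2)])  # ['red sofa', 'grey sofa']
```

At catalog scale, the brute-force loop would normally be replaced by an approximate nearest-neighbour index.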

Scan QR Codes & Barcodes:

Scan QR codes to open websites or access information, or scan product barcodes to retrieve details, reviews, and shopping options.
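Decoding the QR code or barcode itself is normally left to a library such as Google's ML Kit barcode scanning API or ZXing. Once a product barcode is decoded, a cheap first step before any product lookup is to validate its check digit. This sketch assumes the code is an EAN-13 (the common 13-digit retail format) and applies the standard EAN-13 check-digit rule.

```python
def ean13_is_valid(code: str) -> bool:
    """Check the length, digits, and check digit of an EAN-13 barcode."""
    if len(code) != 13 or any(c not in "0123456789" for c in code):
        return False
    digits = [int(c) for c in code]
    # Weights alternate 1, 3, 1, 3, ... over the first 12 digits.
    total = sum(d * (3 if i % 2 else 1) for i, d in enumerate(digits[:12]))
    return (10 - total % 10) % 10 == digits[12]


print(ean13_is_valid("4006381333931"))  # True
print(ean13_is_valid("4006381333932"))  # False (wrong check digit)
```

Rejecting misreads locally avoids wasting a product-database query on a barcode that was scanned incorrectly.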

Explore Places:

Obtain information on landmarks, restaurants, and shops around you, such as ratings, hours, historic information, and popular dishes.

Add Events to Calendar:

Scan an event flyer or billboard, and the photo analysis mobile app automatically extracts the event details and suggests adding the event to your calendar.
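The hard part of this feature is extracting the title, date, and venue from the OCR text. The sketch below assumes that step has already happened and shows only how extracted details could be turned into a standard iCalendar (RFC 5545) event that calendar apps can import. `event_to_ics` and the `PRODID` value are hypothetical.

```python
import uuid
from datetime import datetime, timezone


def _ics_text(value: str) -> str:
    # RFC 5545 TEXT escaping: backslash, semicolon, comma, and newline.
    return (value.replace("\\", "\\\\").replace(";", "\\;")
                 .replace(",", "\\,").replace("\n", "\\n"))


def event_to_ics(summary: str, start: datetime, end: datetime, location: str = "") -> str:
    """Build a minimal one-event iCalendar file from extracted flyer details."""
    if start.tzinfo is None or end.tzinfo is None:
        raise ValueError("start and end must be timezone-aware")
    fmt = "%Y%m%dT%H%M%SZ"  # UTC date-time form
    lines = [
        "BEGIN:VCALENDAR",
        "VERSION:2.0",
        "PRODID:-//Example//Lens Clone Sketch//EN",
        "BEGIN:VEVENT",
        f"UID:{uuid.uuid4()}@example.invalid",
        f"DTSTAMP:{datetime.now(timezone.utc).strftime(fmt)}",
        f"DTSTART:{start.astimezone(timezone.utc).strftime(fmt)}",
        f"DTEND:{end.astimezone(timezone.utc).strftime(fmt)}",
        f"SUMMARY:{_ics_text(summary)}",
    ]
    if location:
        lines.append(f"LOCATION:{_ics_text(location)}")
    lines += ["END:VEVENT", "END:VCALENDAR"]
    # Lines longer than 75 octets should be folded; omitted here for brevity.
    return "\r\n".join(lines) + "\r\n"


ics = event_to_ics(
    "Jazz Night",
    datetime(2025, 3, 1, 19, 0, tzinfo=timezone.utc),
    datetime(2025, 3, 1, 22, 0, tzinfo=timezone.utc),
    location="Hall A, Dubai",
)
print(ics)
```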

Save Business Card Contacts:

Aim the camera at a business card, and the camera-based search app extracts the contact information and saves it directly to your phone's contact list.
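A minimal sketch of the extraction step, assuming the card has already been run through OCR: regular expressions pull out an email address and a phone number, and the result is written as a vCard (RFC 6350), a format that phone contact apps can import. The patterns and the "first line is the name" rule are deliberately naive, hypothetical heuristics; a production app would more likely use an entity-extraction model.

```python
import re

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d ().-]{6,}\d")


def _vcard_text(value: str) -> str:
    # Escape backslashes and commas in vCard text values.
    return value.replace("\\", "\\\\").replace(",", "\\,")


def card_text_to_vcard(ocr_text: str) -> str:
    """Turn OCR output from a business card into a minimal vCard 4.0 entry."""
    lines = [ln.strip() for ln in ocr_text.splitlines() if ln.strip()]
    # Naive heuristic: assume the first non-empty line is the person's name.
    name = lines[0] if lines else "Unknown"
    out = ["BEGIN:VCARD", "VERSION:4.0", f"FN:{_vcard_text(name)}"]
    phone = PHONE_RE.search(ocr_text)
    if phone:
        out.append(f"TEL;TYPE=work:{phone.group()}")
    email = EMAIL_RE.search(ocr_text)
    if email:
        out.append(f"EMAIL;TYPE=work:{email.group()}")
    out.append("END:VCARD")
    return "\r\n".join(out) + "\r\n"


sample = "Aisha Khan\nProduct Manager\nPhone: +971 4 123 4567\naisha.khan@example.com"
print(card_text_to_vcard(sample))
```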

Look Up Words You Don't Know:

Highlight words in a book or document with Lens, and it returns definitions, pronunciation, and other search results for quick learning.

Get Recipe Information:

Scan ingredients you have or dishes on a menu, and the smart image search tool can show reviews, dietary information, or even popular dishes from Google Maps.

Identify Skin Conditions:

By comparing a camera scan of your skin with visually similar images on the internet, the visual search engine app can help you identify and learn about possible skin conditions. (This is for information only and is NOT A DIAGNOSIS.)

Listen To Text:

Once text is scanned, you can tap a Listen button to have it read aloud, which is useful for learning pronunciation or for accessibility.

Multisearch (Image + Text):

The AR camera app combines images and text in a single search: take a picture of a shirt you like and add the word "blue" to find similar shirts in that color.
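One simple way to approximate an "image plus text" search is to filter candidates by the text refinement and then rank the survivors by visual similarity. This is an illustrative assumption, not how Google implements multisearch; production systems may instead embed the image and text jointly. `Product`, its `tags`, and the toy embeddings are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import FrozenSet, List, Sequence


@dataclass
class Product:
    name: str
    tags: FrozenSet[str]
    embedding: Sequence[float]


def _cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


def multisearch(photo_embedding: Sequence[float], text: str,
                catalog: List[Product], k: int = 5) -> List[str]:
    """Keep products whose tags contain every query word, then rank by visual similarity."""
    words = set(text.lower().split())
    candidates = [p for p in catalog if words <= p.tags]
    candidates.sort(key=lambda p: _cosine(photo_embedding, p.embedding), reverse=True)
    return [p.name for p in candidates[:k]]


catalog = [
    Product("blue oxford shirt", frozenset({"shirt", "blue"}), [0.9, 0.1]),
    Product("white oxford shirt", frozenset({"shirt", "white"}), [0.95, 0.05]),
    Product("blue denim jacket", frozenset({"jacket", "blue"}), [0.1, 0.9]),
]
shirt_photo = [1.0, 0.0]  # embedding of the photographed shirt
print(multisearch(shirt_photo, "blue", catalog))  # ['blue oxford shirt', 'blue denim jacket']
```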

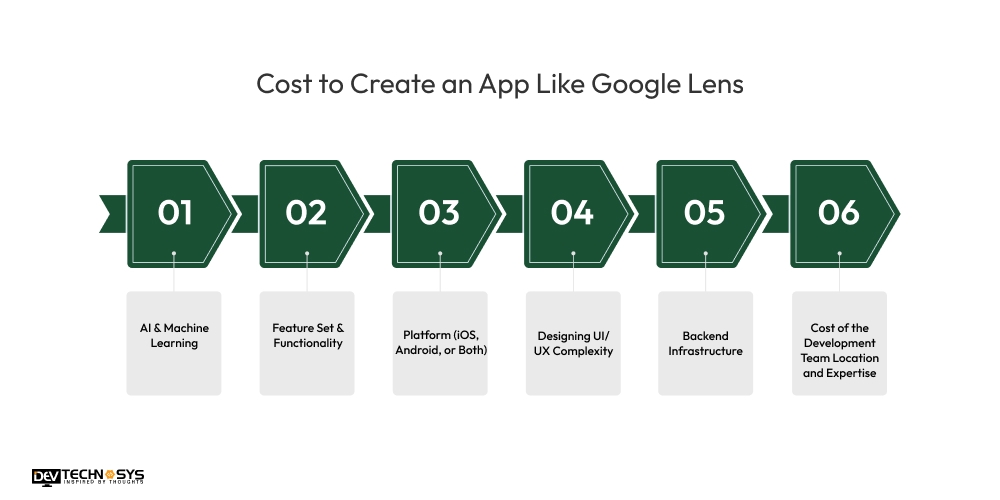

Cost to Create an App Like Google Lens

The cost to create an app like Google Lens ranges from about $8,000 to $30,000, with the AI and machine learning capabilities requiring the largest investment. Hiring a software developer to build a basic version starts at around $8,000, while a full-featured app with all the bells and whistles can easily cost $30,000 or more. Six main factors affect the cost to create an app like Google Lens:

| Cost Factor | Impact on Cost | Cost Range Contribution |
|---|---|---|
| AI & Machine Learning | High | $3,000 – $10,000+ |
| Feature Set & Functionality | High | $2,000 – $7,000 |
| Platform (iOS, Android, or Both) | Moderate to High | $1,500 – $5,000 |
| UI/UX Design Complexity | Moderate | $1,000 – $3,000 |
| Backend Infrastructure | High | $2,000 – $6,000 |
| Development Team Location & Expertise | Variable | Can greatly influence the total cost |

AI & Machine Learning:

This is probably the biggest image recognition app development cost factor. Google Lens uses advanced computer vision and complex deep learning models for object recognition, Optical Character Recognition (OCR), and contextual understanding.

Developing your own highly accurate computer vision models can be very expensive, because you need to collect data, train the models, and keep improving them. You can reduce upfront costs by using existing APIs (like Google Cloud Vision AI), but you will pay ongoing usage fees for the service.
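To compare building your own models with paying for an API, it helps to estimate the usage fees. The sketch below assumes a provider that bills per "unit", where each feature run on an image (for example, label detection or text detection) counts as one unit after a monthly free allowance. Some vision APIs are priced this way, but every number here is a placeholder, not a quoted price; check the provider's current price list.

```python
def monthly_vision_api_cost(images_per_month: int,
                            features_per_image: int,
                            free_units: int,
                            price_per_1000_units: float) -> float:
    """Estimate one month's bill for a per-unit priced vision API (placeholder pricing)."""
    units = images_per_month * features_per_image
    billable = max(0, units - free_units)
    return billable / 1000 * price_per_1000_units


# Hypothetical inputs: 50,000 scans, 2 features each (e.g. labels + text),
# 1,000 free units, and $1.50 per 1,000 units.
print(monthly_vision_api_cost(50_000, 2, 1_000, 1.50))  # 148.5
```

Because the bill grows linearly with usage, a fast-growing app may eventually reach the point where a custom model is cheaper than the API.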

Feature Set & Functionality:

The more features you add, the higher the cost to create a Google Lens clone. A basic "identify object" feature costs less than real-time translation, shopping integration, homework help, or complex AR (augmented reality) overlays, because each additional feature requires more development, design, and testing time.

Platform (iOS, Android, or Both):

Building for just one platform (iOS or Android) is less expensive than supporting both, especially if you build natively for each, which roughly doubles the development effort. Cross-platform frameworks reduce costs, but they may not match the native experience of code written in each platform's own language, which can limit highly optimized, performance-intensive features like real-time visual processing.

UI/UX Design Complexity:

An optimal user experience for a camera-based app needs to be visually rich, seamless, and intuitive, which takes more design hours. Highly customized animations, screen flows adapted to different device aspect ratios, and polished content all cost more than designs based on generic templates.

Backend Infrastructure:

An app like Google Lens handles vast amounts of data, so its backend must be scalable enough to ingest images and associated data and to run AI models on them. This part of the Google Lens clone development cost covers servers, databases, APIs, and cloud service subscriptions, as well as design and development, and ongoing maintenance continues to add to the total cost.
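One common way to keep storage and inference costs in check is to key stored images by a hash of their content, so duplicate uploads are stored once and analyzed once. This sketch assumes an in-memory dictionary in place of a real object store such as AWS S3 or Google Cloud Storage; `DedupingImageStore` and `fake_model` are hypothetical names.

```python
import hashlib
from typing import Callable, Dict, List


class DedupingImageStore:
    """In-memory stand-in for object storage plus a cache of recognition results."""

    def __init__(self, analyze: Callable[[bytes], List[str]]):
        self._analyze = analyze               # e.g. a model or vision API call
        self.blobs: Dict[str, bytes] = {}     # storage key -> image bytes
        self.results: Dict[str, List[str]] = {}

    @staticmethod
    def key_for(image: bytes) -> str:
        # A content-derived key means identical uploads map to the same object.
        return "images/" + hashlib.sha256(image).hexdigest()

    def ingest(self, image: bytes) -> List[str]:
        key = self.key_for(image)
        if key not in self.blobs:
            self.blobs[key] = image
            self.results[key] = self._analyze(image)  # runs once per unique image
        return self.results[key]


calls = []


def fake_model(image: bytes) -> List[str]:
    calls.append(image)  # record how often the "model" actually runs
    return ["backpack"]


store = DedupingImageStore(fake_model)
for upload in (b"photo-1", b"photo-1", b"photo-2"):
    store.ingest(upload)
print(len(store.blobs), len(calls))  # 2 2
```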

Development Team Location & Expertise:

Hourly rates at image recognition app development companies vary greatly by location. For example, developers in North America or Western Europe ($80-$200+/hour) are significantly more expensive than those in Eastern Europe ($40-$80/hour) or India ($20-$50/hour).

Specialized expertise in a niche area such as AI or computer vision usually commands a higher hourly rate, but it can lower the overall cost by reducing the number of hours a project needs.
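The effect of location comes down to simple arithmetic: labor cost is roughly the number of hours multiplied by the hourly rate. The sketch uses the rate ranges quoted above (treating "$200+" as $200) and an assumed, purely hypothetical 300-hour project to show how the same scope can be priced very differently by region.

```python
from typing import Tuple

# Hourly rate ranges (USD) from the text; "200+" is treated as 200 here.
HOURLY_RATES = {
    "North America / Western Europe": (80, 200),
    "Eastern Europe": (40, 80),
    "India": (20, 50),
}


def labor_cost_range(hours: float, region: str) -> Tuple[float, float]:
    low, high = HOURLY_RATES[region]
    return hours * low, hours * high


# Hypothetical project size; real estimates depend on scope.
project_hours = 300
for region in HOURLY_RATES:
    low, high = labor_cost_range(project_hours, region)
    print(f"{region}: ${low:,.0f} - ${high:,.0f}")
```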

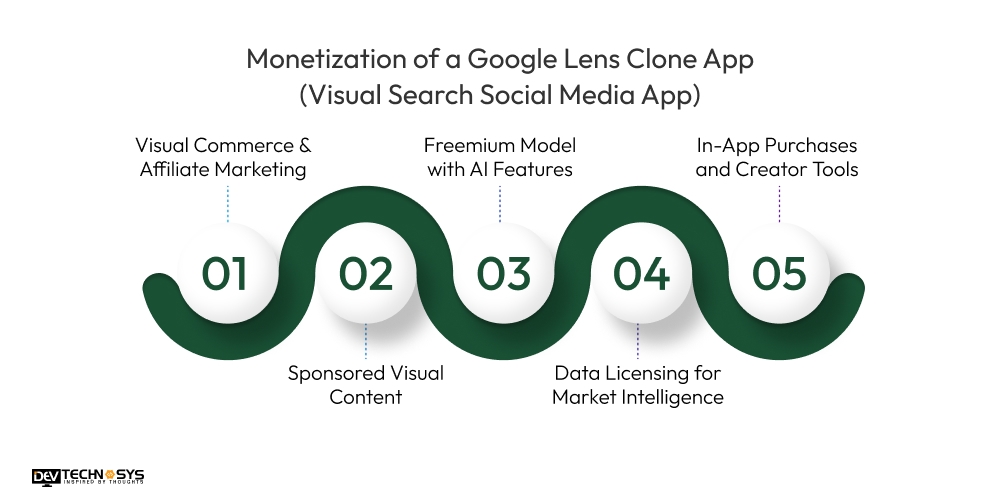

Monetization of a Google Lens Clone App (Visual Search Social Media App)

A Google Lens-type app with social elements offers different paths to monetization. Here are five ideas for building lasting revenue from a visual AI consumer product.

Visual Commerce & Affiliate Marketing

Let users photograph real-world products, such as clothes, gadgets, or home decor, and quickly retrieve purchase links from partner eCommerce platforms like Amazon, Flipkart, or Shopify.

Each time a click on one of these links leads to a sale on a partner platform, you earn an affiliate commission. This not only improves engagement and user experience but also leads users seamlessly along a monetized shopping journey without leaving the app.

The demand is already there: according to Retail Dive, 75% of consumers report using a visual search tool to find products before purchase, which indicates high user intent and potentially high conversion rates available for monetization.

Sponsored Visual Content

Brands can pay for placements in visual search results. For example, when a user scans a backpack, a sponsored listing from a backpack brand appears at the top of the results.

The listing could appear at the top of the search screen, similar to Google's ads above organic search results. Brands can be charged per click (CPC) or per thousand impressions (CPM), depending on the type of engagement they want to pay for.

According to eMarketer, digital ad spending in visual platforms is forecasted to reach $63 billion by 2025 globally, which is a strong opportunity for monetization.
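The difference between the two pricing models is easiest to see with numbers. Under CPC a brand pays per click; under CPM it pays per 1,000 impressions. The rates and click-through rate below are hypothetical, chosen only to show the arithmetic, and effective CPM (eCPM) puts both models on the same footing.

```python
def cpc_revenue(clicks: int, cost_per_click: float) -> float:
    return clicks * cost_per_click


def cpm_revenue(impressions: int, cost_per_mille: float) -> float:
    # CPM is priced per 1,000 impressions.
    return impressions / 1000 * cost_per_mille


def effective_cpm(revenue: float, impressions: int) -> float:
    # Revenue normalised per 1,000 impressions, to compare CPC and CPM deals.
    return revenue * 1000 / impressions


# Hypothetical campaign: 200,000 sponsored impressions with a 1% click-through rate.
impressions = 200_000
clicks = impressions // 100
print(cpm_revenue(impressions, 4.00))                         # 800.0
print(cpc_revenue(clicks, 0.50))                              # 1000.0
print(effective_cpm(cpc_revenue(clicks, 0.50), impressions))  # 5.0
```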

Freemium Model with AI Features

Offer core visual search and basic social features for free to build a wide audience, then charge a monthly or yearly subscription for higher-end features such as multi-object scanning, offline Lens use, HD image scanning, or smart search history.

This lets users explore your app for free while generating revenue from power users.

Apps like Pinterest and Snapchat already monetize premium tools and business features, and you could follow a similar freemium model.
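In code, a freemium model usually comes down to an entitlement check before a premium feature runs. A minimal sketch, assuming two tiers and hypothetical feature identifiers based on the premium examples above; in a real app the user's tier would come from purchase records verified on the server rather than from the client.

```python
from enum import Enum


class Tier(Enum):
    FREE = "free"
    PREMIUM = "premium"


# Hypothetical feature flags based on the premium examples above.
PREMIUM_FEATURES = frozenset({"multi_object_scan", "offline_mode", "hd_scan", "smart_history"})


def can_use(tier: Tier, feature: str) -> bool:
    """Free users get everything except premium features; premium users get all."""
    return tier is Tier.PREMIUM or feature not in PREMIUM_FEATURES


print(can_use(Tier.FREE, "basic_scan"))       # True
print(can_use(Tier.FREE, "offline_mode"))     # False
print(can_use(Tier.PREMIUM, "offline_mode"))  # True
```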

Data Licensing for Market Intelligence

Ask users to opt in before collecting their visual search data, keeping privacy requirements in mind. With consent, you can gather data on who is searching visually, general trends, and user behavior. This anonymized data can then be sold or licensed to retailers, brand strategists, market researchers, or product developers in real time, helping them stay ahead of the competition by understanding crowdsourced customer interest, brand presence, and the behavior of visual search users.

The data monetization market is forecast to reach $6.1 billion globally by 2025 (Allied Market Research), indicating the significant value in data as a service.
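Before any data is licensed, individual searches are typically aggregated. A minimal sketch, assuming each logged event records a user ID, an opt-in flag, a region, and a product category (a hypothetical schema): it counts distinct consenting users per group and suppresses groups below a threshold. Small-count suppression lowers re-identification risk but is not, on its own, a complete anonymization scheme.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

# Each event: (user_id, opted_in, region, product_category)
Event = Tuple[str, bool, str, str]


def aggregate_trends(events: Iterable[Event], min_users: int = 5) -> Dict[Tuple[str, str], int]:
    """Count distinct opted-in users per (region, category), suppressing small groups."""
    users: Dict[Tuple[str, str], Set[str]] = defaultdict(set)
    for user_id, opted_in, region, category in events:
        if opted_in:  # only use data from users who consented
            users[(region, category)].add(user_id)
    # Drop groups smaller than min_users, which are more likely to identify individuals.
    return {group: len(ids) for group, ids in users.items() if len(ids) >= min_users}


events = [(f"u{i}", True, "Dubai", "sneakers") for i in range(6)]
events += [("u7", True, "Abu Dhabi", "lamps"), ("u8", False, "Dubai", "sneakers")]
print(aggregate_trends(events))  # {('Dubai', 'sneakers'): 6}
```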

In-App Purchases and Creator Tools

Add an in-app marketplace where creators and influencers can buy AI filters, smart tagging tools, content enhancement packs, or promotional boosts. This generates revenue while encouraging content creation: users who want greater visibility or better-looking content can buy these items as microtransactions.

Businesses may want to pay for analytics or to unlock community engagement tools. This would follow the model that has been proven to be successful on social platforms such as TikTok and Instagram, where creators spend in order to stand out.

Technology Stack for Google Lens Alternative

Now that you know the image recognition app development cost, here is the technology stack, which also affects the overall budget.

| Layer | Technology/Tool | Purpose |
|---|---|---|
| Frontend (Mobile) | Flutter / React Native / Swift (iOS) / Kotlin (Android) | Cross-platform or native app development for iOS and Android |
| Frontend (Web) | React.js / Vue.js | Web interface for admin or social interactions |
| Backend | Node.js / Python (Django, Flask) / Ruby on Rails | Server-side logic, APIs, authentication, user data management |
| Database | PostgreSQL / MongoDB | Storage of user profiles, posts, tags, product data |
| Cloud Storage | AWS S3 / Google Cloud Storage | Store images, scanned content, and media files |
| AI/ML Engine | TensorFlow / PyTorch / OpenCV | Image recognition, object detection, and visual analysis |
| Visual Search API | Google Cloud Vision / Amazon Rekognition / Custom ML Model | Extract and identify visual elements from photos |
| Search Engine | Elasticsearch / Apache Solr | Fast retrieval of matched images, objects, or product info |
| Authentication | Firebase Auth / Auth0 / OAuth 2.0 | Secure login, social login, session handling |
| Real-Time Features | Firebase Realtime DB / Socket.io | Live updates, chats, notifications |
| Push Notifications | Firebase Cloud Messaging / OneSignal | Sending user alerts, updates, and engagement prompts |
| Analytics | Google Analytics / Mixpanel / Firebase Analytics | User behavior tracking, performance monitoring |
| Deployment & CI/CD | Docker / Kubernetes / Jenkins / GitHub Actions | Continuous integration, scalable deployment |
| Hosting | AWS (EC2, Lambda) / Google Cloud Platform / Azure | Hosting backend servers and ML models |

How Dev Technosys Can Help You Create An App Like Google Lens?

Dev Technosys is a leading mobile app development company in the UAE with unparalleled experience in AI, computer vision, and mobile app development, helping to turn your vision of a visual search app into a market-ready solution. We have a proven portfolio of successful tech projects and a track record of delivering high performance, scalability, and exceptional user experience.

We stay current with trends and continually integrate the latest features and technologies to maximize the benefits of your project. Let us be your trusted technology partner, whether you're a startup or an enterprise, to build an intelligent, future-ready app like Google Lens that helps you increase engagement and drive growth.

FAQ

1. What Is Google Lens And How Does It Work?

Google Lens is an app that uses artificial intelligence and computer vision to recognize objects, text, and scenes in real time through a smartphone camera and provide information about them.

2. How Much Does It Cost To Build An App Like Google Lens?

The cost to develop an image recognition app like Google Lens can be anywhere from $8,000 to $30,000 based on the desired features, complexity of AI, choice of platform, and location of development.

3. What Features Should A Google Lens Clone Have?

Key features of a mobile computer vision app like this include real-time object recognition, text scanning, picture translation, product search, AR integration, and visual search history; the option to share on social media is a helpful bonus.

4. What Technologies Are Used To Make An App Like Google Lens?

Core technologies for an app like Google Lens include TensorFlow, OpenCV, React Native, Node.js, and Google Cloud Vision, along with services for cloud storage, real-time updates, and a visual search API.

5. Is It Possible To Monetize A Google Lens-Style App?

Yes, monetization strategies could be through affiliate links for products, visual commerce links to products directly, high-end premium AI features, licensed data, and sponsored visual content in partnerships with brands or eCommerce platforms.

6. How Much Time Does it Take to Build an App Like Google Lens?

Developing an app like Google Lens can take 3 to 6 months, depending on complexity, team expertise, and features such as AI integration, real-time image recognition, and platform support.